刘洲

Master of Artificial Intelligence

South-Central Minzu University

About Me

Zhou Liu is a master's student at the Engineering Center Laboratory of South-Central Minzu University (SCUEC). His research interests include diffusion models, image editing, and 3D reconstruction.

- Diffusion Model

- Image Editing

- 3D Reconstruction

Master in Computer Science, 2022

SCUEC

Featured Publications

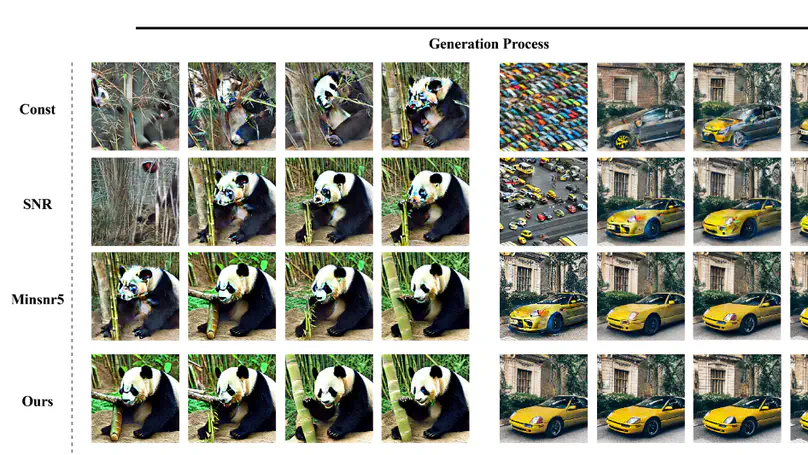

Recently, text-guided diffusion models have demonstrated outstanding performance in image generation, opening up new possibilities for specific tasks in various domains. Consequently, there is an urgent demand for training diffusion models tailored to specific tasks. However, the long training time and slow convergence of diffusion models make this difficult, and existing loss weight strategies leave room for improvement. First, the predefined loss weight strategy based on SNR (signal-to-noise ratio) transforms the diffusion process into a multi-objective optimization problem, but reaching Pareto optimality under this strategy requires considerable time. In contrast, the unconstrained optimization weight strategy can achieve lower objective values, but the loss weights for each task fluctuate, which makes training unstable and inefficient. In this paper, we propose a new loss weight strategy, Dy-Sigma, that combines the advantages of predefined and learnable loss weights, effectively balancing the gradient conflicts in multi-objective optimization. Experimental results demonstrate that our strategy significantly improves convergence speed, being 3.7 times faster than the Const strategy.
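To make the idea of a predefined, SNR-based loss weight concrete, here is a minimal sketch. It is not Dy-Sigma, whose formulation is not given on this page. The cosine noise schedule, the clamp value `gamma=5.0`, and all function names are assumptions chosen for illustration: a Min-SNR-style clamp stands in for "a predefined SNR-based strategy", and a constant weight stands in for the Const baseline.

```python
import math


def cosine_alpha_bar(t: int, num_steps: int, s: float = 0.008) -> float:
    """Cumulative signal level at step t under a cosine schedule (assumed)."""
    def f(u: float) -> float:
        return math.cos((u / num_steps + s) / (1 + s) * math.pi / 2) ** 2
    return f(t) / f(0)


def snr(t: int, num_steps: int) -> float:
    """Signal-to-noise ratio alpha_bar / (1 - alpha_bar); valid for t >= 1."""
    a = cosine_alpha_bar(t, num_steps)
    return a / (1.0 - a)


def const_weight(t: int, num_steps: int) -> float:
    """Constant baseline: every timestep contributes equally to the loss."""
    return 1.0


def min_snr_weight(t: int, num_steps: int, gamma: float = 5.0) -> float:
    """Min-SNR-style weight for noise prediction: min(SNR, gamma) / SNR.

    Low-noise steps (very high SNR) are down-weighted; noisy steps keep weight 1.
    """
    value = snr(t, num_steps)
    return min(value, gamma) / value


if __name__ == "__main__":
    T = 1000  # hypothetical number of diffusion steps
    for t in (1, 250, 500, 750, 999):
        print(t, const_weight(t, T), min_snr_weight(t, T))
```

Each timestep's loss is treated as one task, so a weight function like this fixes in advance how much each task counts. A learnable scheme would instead update those weights during training.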

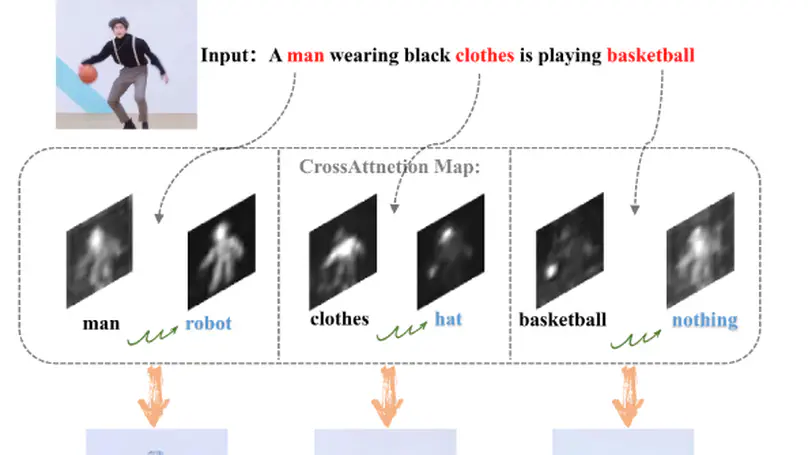

Recently, text-guided diffusion models have shown remarkable effectiveness in image editing. However, there is still substantial room for improvement in editing real images outside the model's domain. First, addressing OOD (out-of-domain) issues typically requires an extended training process to achieve knowledge embedding. Second, achieving high-fidelity edits in localized areas of real images while maintaining background consistency poses a considerable challenge. Therefore, we propose a text-driven framework for real image editing that requires no masking or fine-tuning. Our method has three strengths. First, we adopt an intuitive text-driven editing method that needs no additional information. Second, by freezing the diffusion model and training only an error corrector, we address the cumulative error of the inversion process while preserving the rich prior knowledge of the diffusion model and avoiding catastrophic forgetting. Finally, we introduce a single-step linear optimization algorithm to reduce the training cost in the early stages of editing. Experimental results indicate that our method outperforms recent approaches on multiple metrics, and qualitative comparisons demonstrate high fidelity and controllability in our editing results. This provides an efficient and powerful solution for text-driven real-image editing. You can find our code here.